On November 27th, I missed my flight to Bali.

The island’s highest volcano, Mount Agung, appeared to be on the brink of an eruption.

It wasn’t the lava or the ash in the sky that caused the trouble, though.

First, my airline charged me the full fare to get on the next available flight. When I arrived at my final destination, it turned out they’d lost my luggage, too. An hour later, I left the arrivals area to be greeted by a smiling man who’d come, uninvited, from my hotel to collect me — despite it being past 2am.

When I finally reached my hotel room, I found a pair of box-fresh pajamas next to a handwritten note welcoming me and apologizing for the inconvenience.

I woke up 10 hours later. The buffet had closed, but the staff ushered me to a table anyway, placing a steaming coffee in front of me with another smile.

After breakfast, I prepared myself for the inevitable barrage of calls to try to reclaim my luggage.

As I clicked the keycard into my room, something looked different.

My lost suitcase was waiting next to my bed.

Now, I won’t name names: let’s just say that I’ll be bad-mouthing this particular airline for the foreseeable future.

The hotel, on the other hand? It’s the way they intuitively understood my needs that will have me waxing lyrical to my friends and writing glowing reviews.

Clearly, these two companies define themselves very differently.

What defines our brand?

Here’s a clue: it’s not our brilliant marketing, our slick website, or even our awesome product. We can’t A/B test it.

Marty Neumeier, bestselling author of The Brand Gap, puts it perfectly:

“It isn’t what you say it is — it’s what they say it is.”

Our brand IS the sum of every single experience a person has with our company — online and offline.

Almost everything is replicable these days. We’re all in danger of having our ideas copied overnight, from our first line of code to the last line of our mission statement.

But nobody can steal our customer experience. This vague, intangible element is the one factor that keeps us unique.

As I outlined in my last story, delivering an outstanding customer experience starts with treating our customers as if they were our best friends.

This is more than a marketing tool. It’s a long-term, all-encompassing mindset, and it involves every single person on our team.

Satisfaction or happiness?

Aiming to keep our customers satisfied is nothing new.

But should customer satisfaction be our barometer for success?

With so many near-identical companies jostling for attention online these days, customer satisfaction per se won’t make us stand out.

Satisfaction doesn’t guarantee loyalty. Satisfied customers leave companies every day.

And while acquisition results may keep us afloat, customer retention is the real driver of long-term growth.

Bill Macaitis, former Chief Marketing Officer of Slack, wasn’t satisfied when someone signed up, or even when someone became a paying customer. He was only fully satisfied when someone was so happy with the service that they would recommend Slack to someone else.

Thus, we should go beyond delivering mere satisfaction and, instead, aim for happiness. Because happiness = loyalty.

Pro Tip

Enhance customer satisfaction and improve the experience with Jotform AI Agents by offering real-time assistance and personalized AI interactions.

How to measure happiness & other metrics

We can speculate on whether or not our customers are happy.

Or, we can ask them ourselves.

Naturally, this is where metrics and KPIs come in. These numbers are useful not as a miracle solution, but because they help us learn.

However, there’s endless data we could be tracking — and much of it will lead to more questions than answers.

And as I explained in my previous story, an over-reliance on data can cloud our judgement and even destroy our relationship with the customer.

When it comes to metrics, less is more.

Instead of drowning in graphs and spreadsheets, we can select a few baseline metrics and track them on a company-wide basis.

But there isn’t a tried-and-tested set of calculations that works for everyone, nor a set of figures we should aim for. What counts as major success for a small startup could signal bankruptcy for a global conglomerate.

The value of this data depends purely on the context in which it exists.

So, before setting any metrics, we should ask ourselves:

- How does this contribute to our company’s long-term vision?

- What are we trying to achieve/change on the basis of this data?

- What objective are we measuring this against?

It’s also important to consider the balance between short- and long-term metrics. Certain results might capture a snapshot of customer happiness, but these do not necessarily translate into success in the long run.

Short-term metrics have their place, but they will be effective only when integrated with long-term tactics in the wider framework of the customer journey.

Oh, and measuring is completely meaningless — unless it’s used to drive action (and not distraction).

Below are some metrics worth keeping an eye on.

1. NPS® (Net Promoter Score®)

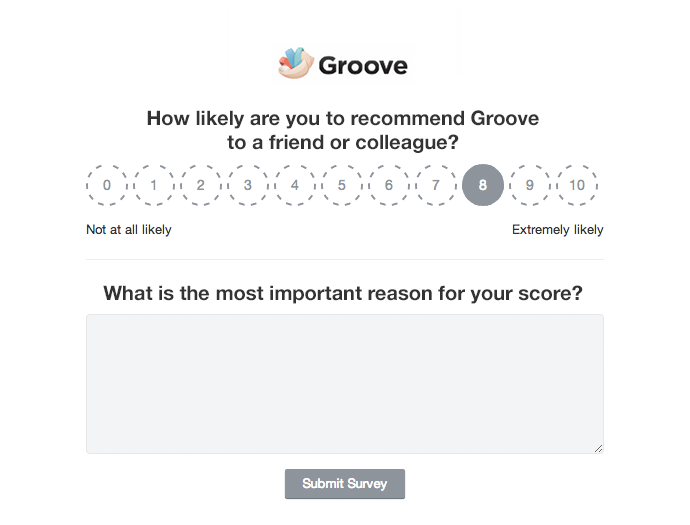

Dubbed “the one number you need to grow”, NPS is widely regarded as the most valuable indicator of long-term happiness, and the simplest to use.

It is a customer loyalty metric that asks:

1. How likely is it that you would recommend our company/product/service to a friend or colleague?

2. What is the reason for your score?

The first question, answered on a scale from 0 to 10, forms the basis of NPS:

- Those who indicate a 9 or 10 are “promoters”.

- Those who indicate a 7 or 8 are “passives”.

- Those who indicate 0 to 6 are “detractors”.

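Our NPS is calculated by subtracting our percentage of detractors from our percentage of promoters. Passives are included in the total number of responses but are otherwise ignored, so the score can range from -100 (all detractors) to +100 (all promoters).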
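The calculation is simple enough to sketch in a few lines of Python. This is a minimal illustration, assuming the answers to the first question have already been collected as integers from 0 to 10; the function names are our own, not part of any survey tool:

```python
def classify(score: int) -> str:
    """Map a 0-10 answer to its NPS segment."""
    if not 0 <= score <= 10:
        raise ValueError(f"NPS answers must be between 0 and 10, got {score}")
    if score >= 9:
        return "promoter"
    if score >= 7:
        return "passive"
    return "detractor"


def net_promoter_score(scores: list[int]) -> float:
    """Percentage of promoters minus percentage of detractors (-100 to +100)."""
    if not scores:
        raise ValueError("need at least one response")
    segments = [classify(s) for s in scores]
    promoters = segments.count("promoter")
    detractors = segments.count("detractor")
    # Passives count toward the total (the denominator) but not the numerator.
    return (promoters - detractors) * 100 / len(segments)


# Hypothetical answers to "How likely is it that you would recommend us...?"
answers = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]
print(net_promoter_score(answers))  # 5 promoters, 3 detractors out of 10 -> 20.0
```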

Ideally, we should see our NPS climb over time.

The second question (“What is the reason for your score?”) digs deeper into why customers scored the way they did. Qualitative analysis of answers to both these questions should help us to uncover:

- What promoters love about our company

- What is preventing our passives from becoming promoters

- What is making our detractors unhappy, and how to fix it
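Sorting the free-text answers by segment is a practical first step before reading them. A minimal sketch, assuming each response is a hypothetical (score, reason) pair exported from the survey; it reuses the same 9–10 / 7–8 / 0–6 split:

```python
from collections import defaultdict


def classify(score: int) -> str:
    """Same 0-10 segmentation as the NPS calculation: 9-10, 7-8, 0-6."""
    if score >= 9:
        return "promoter"
    if score >= 7:
        return "passive"
    return "detractor"


def group_reasons(responses: list[tuple[int, str]]) -> dict[str, list[str]]:
    """Bucket free-text reasons by the segment of the score they came with."""
    grouped = defaultdict(list)
    for score, reason in responses:
        text = reason.strip()
        if text:  # skip empty answers to the optional second question
            grouped[classify(score)].append(text)
    return dict(grouped)


# Hypothetical survey export.
feedback = [
    (10, "Setup took five minutes."),
    (8, "Good, but the mobile app is slow."),
    (3, "Support never replied."),
    (9, ""),
]
print(group_reasons(feedback))
```

Each bucket then maps onto one of the three questions above: what promoters love, what holds passives back, and what is upsetting detractors.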

NPS is about overall, long-term happiness, which means it’s important to send out surveys fairly regularly.

Best practice recommends surveying at three points in the customer journey: 1 month, 6 months and 1 year.
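If surveys are triggered from each customer’s sign-up date (an assumption; the article doesn’t say where the clock starts), the three send dates can be worked out as below. Clamping to the end of shorter months, so that 31 January plus one month becomes the last day of February, is a design choice of this sketch:

```python
import calendar
from datetime import date

SURVEY_OFFSETS_MONTHS = (1, 6, 12)  # 1 month, 6 months, 1 year


def add_months(start: date, months: int) -> date:
    """Shift a date by whole months, clamping to the last day of shorter months."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def nps_survey_dates(signup: date) -> list[date]:
    """Dates on which to send the NPS survey to a customer who signed up on `signup`."""
    return [add_months(signup, m) for m in SURVEY_OFFSETS_MONTHS]


# Hypothetical sign-up on 31 January 2024 (a leap year).
print(nps_survey_dates(date(2024, 1, 31)))
# [datetime.date(2024, 2, 29), datetime.date(2024, 7, 31), datetime.date(2025, 1, 31)]
```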

How to set up Net Promoter Score:

You can build an NPS form from scratch using Jotform’s free form builder, or start with a ready-to-use NPS template.

Once you’ve started receiving answers, you can begin to calculate your Net Promoter Score.

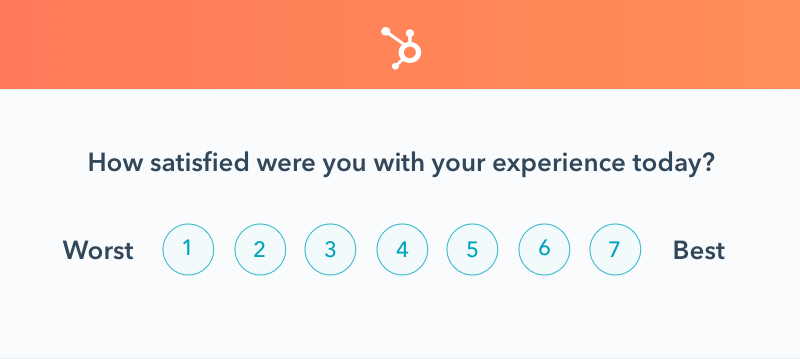

2. CSAT (Customer Satisfaction)

While NPS focuses on long-term happiness, CSAT is a key metric in calculating short-term success.

CSAT tends to measure customer happiness at specific touchpoints (e.g., after a conversation, the introduction of a new service, or the resolution of a problem).

It can help us identify which parts of the customer journey contribute towards a better experience.

CSAT asks questions like “How would you rate your recent experience with X?”

Customers then rate their experience on a scale.

The scale can be 1–3, 1–5, or 1–10; there isn’t a universal best practice as to which survey scale to use.

The answer can be simplified further by asking “Were you satisfied with your experience?”, with customers responding either YES or NO.

If customers have selected ‘NO’ or a low-ranking number, we can provide the option for them to explain their response.
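The article leaves the scoring method open. One common convention, assumed here rather than prescribed, reports CSAT as the percentage of respondents who pick one of the top two ratings on a 1–5 scale; the YES/NO variant is simply the percentage of YES answers:

```python
def csat_percent(ratings: list[int], scale_max: int = 5, satisfied_min: int = 4) -> float:
    """Percentage of ratings at or above `satisfied_min` on a 1..scale_max scale."""
    if not ratings:
        raise ValueError("need at least one rating")
    if any(not 1 <= r <= scale_max for r in ratings):
        raise ValueError(f"ratings must be between 1 and {scale_max}")
    satisfied = sum(1 for r in ratings if r >= satisfied_min)
    return satisfied * 100 / len(ratings)


def csat_yes_no(answers: list[bool]) -> float:
    """Percentage of YES answers to 'Were you satisfied with your experience?'."""
    if not answers:
        raise ValueError("need at least one answer")
    return sum(answers) * 100 / len(answers)


# Hypothetical responses.
print(csat_percent([5, 4, 3, 5, 2]))             # 3 of 5 rated 4 or 5 -> 60.0
print(csat_yes_no([True, True, False, True]))    # 3 of 4 said YES -> 75.0
```

Adjust `scale_max` and `satisfied_min` for a 1–3 or 1–10 scale; where to draw the “satisfied” line is a judgment call.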

How to set up Customer Satisfaction Score:

Setting up the right CSAT depends on what exactly you want to measure.

You can get started with a simple survey template, or focus on measuring multiple touchpoints using a more complex template.

You can also combine open-ended and scale questions, or build your CSAT survey from scratch using the free form builder.

3. CES (Customer Effort Score)

CES is another popular short-term metric that enables us to measure customer happiness with one question.

According to research by CEB, the creators of the Customer Effort Score:

“Service organizations create loyal customers primarily by reducing customer effort, i.e. helping them solve their problems quickly and easily, not by delighting them in service interactions.”

CES measures the amount of effort that a customer has to put in during an interaction with our brand — whether it’s a purchase, a conversation or the pursuit of information.

Setting up a CES survey follows a similar framework to CSAT, with the primary statement being: “The company made it easy for me to resolve my issue”.

Users then rate how strongly they agree with that statement, on a scale from “strongly disagree” to “strongly agree”.
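A sketch of how those answers might be scored, assuming a seven-point agreement scale mapped to 1 (strongly disagree) through 7 (strongly agree). The intermediate labels, and reporting both the mean and the share of agreeing answers, are assumptions of this sketch. With the statement worded as above, a higher score means less effort:

```python
# Assumed 7-point scale for "The company made it easy for me to resolve my issue."
SCALE = (
    "strongly disagree",
    "disagree",
    "somewhat disagree",
    "neither agree nor disagree",
    "somewhat agree",
    "agree",
    "strongly agree",
)
POINTS = {label: value for value, label in enumerate(SCALE, start=1)}


def customer_effort_score(answers: list[str]) -> tuple[float, float]:
    """Return (mean score on the 1-7 scale, % of answers that agree)."""
    if not answers:
        raise ValueError("need at least one answer")
    try:
        values = [POINTS[a.strip().lower()] for a in answers]
    except KeyError as missing:
        raise ValueError(f"unexpected answer: {missing.args[0]!r}") from None
    mean_score = sum(values) / len(values)
    # "Somewhat agree" (5) and above count as agreeing.
    agree_share = sum(1 for v in values if v >= 5) * 100 / len(values)
    return mean_score, agree_share


# Hypothetical answers: 7 + 6 + 2 + 6 = 21 over 4 answers.
print(customer_effort_score(["Strongly agree", "agree", "disagree", "agree"]))  # (5.25, 75.0)
```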

Here is a quick template you can use to set up and measure Customer Effort Score.

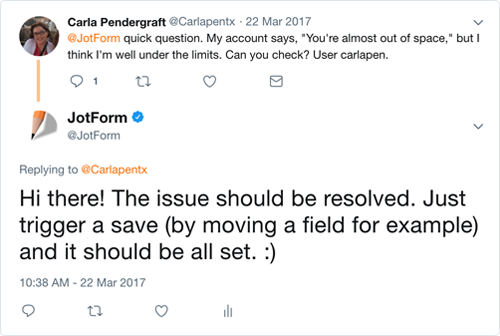

4. Response time

Response time can be a dilemma.

From a customer’s point of view, the faster the better. They would rather have a quick reply, even if it doesn’t solve their query, than an informative yet delayed one.

But with the scarce resources of a startup, response time is often the first place we make trade-offs.

Here is something to focus on: the 90th percentile value. This is the maximum time 90% of our customers have to wait before they get a response.

To calculate this, we can:

- Sort our response times from fastest to slowest.

- Remove the slowest 10% of responses.

- The highest value left is the 90th percentile.
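The three steps translate almost line for line into Python. A minimal sketch, assuming response times are plain numbers in a single unit (minutes here). Dropping the slowest 10% rounded down is the nearest-rank method, so with fewer than 10 responses nothing is removed and the result is simply the slowest reply:

```python
def percentile_90(response_times: list[float]) -> float:
    """Sort, drop the slowest 10% (rounded down), and return the highest value left."""
    if not response_times:
        raise ValueError("need at least one response time")
    ordered = sorted(response_times)        # step 1: fastest to slowest
    drop = len(ordered) // 10               # step 2: size of the slowest 10%
    kept = ordered[: len(ordered) - drop]
    return kept[-1]                         # step 3: highest remaining value


# Hypothetical first-reply times, in minutes.
first_reply_minutes = [3, 12, 5, 45, 8, 2, 30, 7, 9, 240]
print(percentile_90(first_reply_minutes))  # the 240-minute outlier is dropped -> 45
```

Interpolating methods, such as `statistics.quantiles` in Python’s standard library, can return slightly different values on small samples; what matters is using the same method every time so the trend stays comparable.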

Once we know the percentile, we should aim to reduce it.

Of course, there will always be some users beyond the 90th percentile who experience longer delays, but reducing the wait for 90% of users is a great start.

We can categorize these calculations by medium (email, social media, phone, live chat, etc.) or simply make this an overarching metric.

It’s also worth keeping in mind that customers will have varying expectations depending on their channel for contacting us.

According to Jay Baer, “42% of consumers complaining on social media expect a 60 minute response time.” But when it comes to email, 50% of users expect a reply within a day.
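Breaking the same calculation down by channel makes it easy to compare each medium against what customers there expect. A minimal sketch, assuming replies are logged as hypothetical (channel, minutes) pairs; the 60-minute social and one-day email targets are illustrative values loosely derived from the expectations quoted above, not official benchmarks:

```python
from collections import defaultdict

# Hypothetical targets in minutes: 60 minutes on social media, one day for email.
TARGET_MINUTES = {"social": 60, "email": 24 * 60}


def percentile_90(times: list[float]) -> float:
    """Sort, drop the slowest 10% (rounded down), return the highest value left."""
    ordered = sorted(times)
    return ordered[len(ordered) - len(ordered) // 10 - 1]


def channel_report(responses: list[tuple[str, float]]) -> dict[str, dict[str, float]]:
    """Per-channel 90th percentile plus, where a target exists, % of replies within it."""
    by_channel = defaultdict(list)
    for channel, minutes in responses:
        by_channel[channel].append(minutes)
    report = {}
    for channel, times in by_channel.items():
        entry = {"p90_minutes": percentile_90(times)}
        target = TARGET_MINUTES.get(channel)
        if target is not None:
            within = sum(1 for t in times if t <= target)
            entry["pct_within_target"] = within * 100 / len(times)
        report[channel] = entry
    return report


# Hypothetical reply log.
replies = [
    ("social", 15), ("social", 40), ("social", 75), ("social", 20),
    ("email", 300), ("email", 1500), ("email", 600), ("email", 900),
    ("phone", 4), ("phone", 6),
]
for channel, stats in channel_report(replies).items():
    print(channel, stats)
```

Channels without a target (phone, in this example) still get a 90th percentile, so the overarching view and the per-channel view come from the same data.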

The power that a lightspeed response time can have on long-term happiness shouldn’t be underestimated.

For instance, HotelTonight maintains a 24/7 response time of under 10 minutes; this has helped the company retain a loyal customer base and has been a key driver of its explosive growth.

Pro Tip

Looking to improve email response times without sacrificing authenticity? Jotform’s Gmail Agent helps teams draft on-brand replies instantly using AI trained on past conversations and tone—so every customer email feels personal, consistent, and quick.

5. Total conversations

Another simple yet effective metric is a count of every interaction our team has conducted with our customers over a period of time.

Tracking this long-term can provide powerful insight into which times of day are busiest, which issues keep cropping up, and so on.

We can then break this down even further into conversations per teammate, and conversations per teammate per day.
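These counts fall out of a simple conversation log. A minimal sketch, assuming each conversation is recorded as a hypothetical (teammate, start time) pair; the same counters also reveal the busiest hour of the day:

```python
from collections import Counter
from datetime import datetime


def conversation_breakdown(log: list[tuple[str, datetime]]) -> tuple[Counter, Counter, Counter]:
    """Count conversations per teammate, per teammate per day, and per hour of day."""
    per_teammate = Counter(name for name, _ in log)
    per_teammate_per_day = Counter((name, started.date()) for name, started in log)
    per_hour = Counter(started.hour for _, started in log)
    return per_teammate, per_teammate_per_day, per_hour


# Hypothetical log of (teammate, conversation start time) pairs.
log = [
    ("Ana", datetime(2024, 3, 4, 9, 15)),
    ("Ana", datetime(2024, 3, 4, 9, 40)),
    ("Ben", datetime(2024, 3, 4, 14, 5)),
    ("Ana", datetime(2024, 3, 5, 10, 0)),
]
per_teammate, per_day, per_hour = conversation_breakdown(log)
print(per_teammate.most_common())  # [('Ana', 3), ('Ben', 1)]
print(per_hour.most_common(1))     # busiest hour: [(9, 2)]
```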

With a greater knowledge of our support trends, we can see where team members may be overworked and need backup, and stagger shifts or workloads if necessary.

6. Targeted feedback

Targeted feedback is one of the most valuable long-term inputs we can receive, especially when a large amount is collected over a long period of time. A few things to keep in mind:

- It should be simple and straightforward for customers to give us feedback, given that most of them will be short on time and easily distracted.

- We must only ask for feedback when we are prepared to act on it. Without action, the signal we send out is that we don’t care what our customer has to say.

- Perhaps we think some of our customers are just plain wrong. But we shouldn’t dismiss their opinions. Their ‘insane criticisms’ might simply be a finger pointing at our blind spots.

- It’s important to tailor feedback groups in line with what we want to improve. Sending surveys to mass groups will only frustrate users and dilute our insights.

Adding a feedback button to your site makes collecting targeted customer feedback quick and easy (here are a few reasons why you should consider adding that button soon).

Just remember to think: am I asking the right customers the right question, at the right time, in the right way?

The bottom line

Knockout results on a spreadsheet are great — but they aren’t everything.

Remember that intention doesn’t equal action; just because someone says they would recommend us, it doesn’t mean they actually will.

And no matter how cleverly we craft our offering, there’s no guarantee that users will react to it the way we expect or hope.

What we can ensure, however, is that all users will experience the ‘friend-of-mine’ approach, no matter where or how they interact with our brand; they could be on a customer support call, watching an online ad or reading our legal terms of service.

Because ultimately, customer acquisition and customer retention both boil down to the same thing: customer experience. It’s a chicken-and-egg way of thinking.

That’s why every single person at our company influences the experience our brand delivers — and why every single person at our company is responsible for growth.

Therefore, metrics should be set to ensure the ‘friend-of-mine’ approach runs across the board, and to encourage each team member to take personal ownership of the user experience.

Being completely customer-centric is no easy feat. But it’s the first step in creating an environment where our users wield growing power.

So before we start tallying up numbers, we must remember the value of 2am car journeys, handwritten notes and lost-and-found luggage — whatever our version of this may be.

Happiness and experience can’t be measured, but if we make them our priority, we will reap the benefits tenfold.

Net Promoter®, NPS®, NPS Prism®, and the NPS-related emoticons are registered trademarks of Bain & Company, Inc., NICE Systems, Inc., and Fred Reichheld. Net Promoter Score℠ and Net Promoter System℠ are service marks of Bain & Company, Inc., NICE Systems, Inc., and Fred Reichheld.
